An insider’s view…

Written By: Mike Medoff, Co-chair of JT 62443-4-1

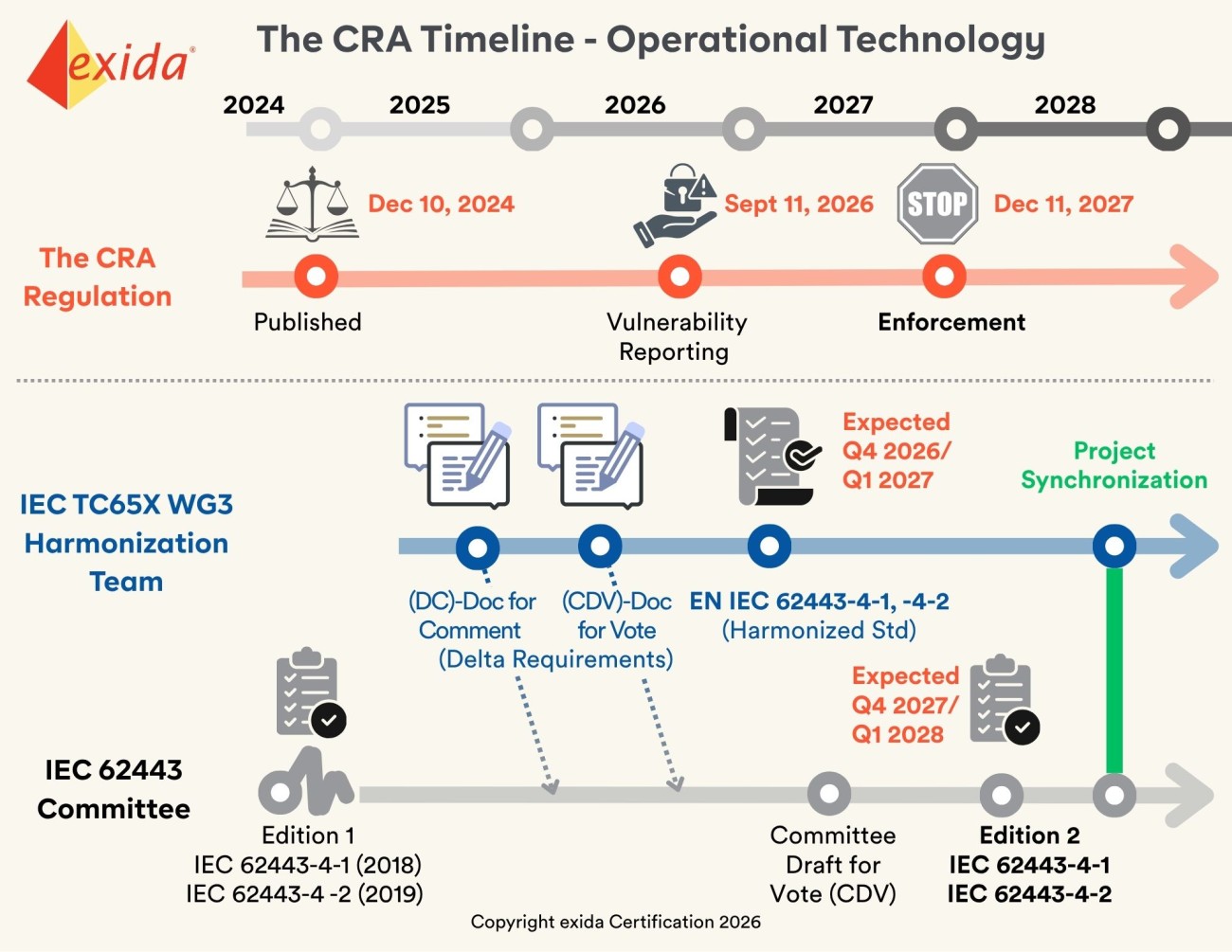

The clock is ticking for manufacturers selling products with digital elements into the European Union. By December 2027, compliance with the Cyber Resilience Act (CRA) becomes mandatory—meaning if your product doesn't meet these strict security laws, you won't be able to sell it in the EU market. While many operational technology (OT) manufacturers rely on IEC 62443 today, standard compliance alone is not enough to guarantee legal entry.

This article dives into "Harmonization": the process for creating the harmonized version of IEC 62443, called EN IEC 62443 – which is the regulatory bridge to CRA. We explain the timeline, the upcoming "Delta" requirements, and why the current roadmap may leave manufacturers with less than 12 months to prove compliance before the deadline. Starting your alignment today isn't just a best practice—it’s a business necessity.

What it Means

Compliance with today’s IEC 62443 standard is NOT sufficient evidence to claim conformity to the CRA regulation. Additional provisions in the harmonized version (EN IEC 62443), currently in development, will need to be addressed. This article explains the harmonization process, timeline, and what it means for manufacturers that currently follow IEC 62443-4-1 and -4-2.

As a manufacturer, you won’t be able to sell your products with digital elements in the EU after December 2027 without first demonstrating CRA conformity. But the finalized roadmap to conformity, the harmonized version of IEC 62443, likely won’t be available until the end of 2026. This means manufacturers could have less than 12 months to prove compliance against the harmonized standard. The best way to address this short window is to start today using the following resources:

- Current IEC 62443 standard,

- The CRA language itself,

- Insights gained from participating in the harmonization process (such as "Delta requirements").

How did we get here / Current Situation

The CRA (law), which entered into force in December 2024, is written in legal terminology, which makes it difficult to use for evaluating compliance. This is where standards such as IEC 62443 come in. But since IEC 62443-4-1 and -4-2 were issued in 2018 and 2019, before the CRA existed, they do not contain all of the guidelines needed to comply with the law.

This means a manufacturer could be fully compliant today with IEC 62443, but not meet all of the CRA’s requirements. They would be prevented from offering the product for sale in the EU.

A version of IEC 62443 is needed that includes all the necessary requirements for compliance with the CRA. This version is called EN IEC 62443 (where “EN” stands for European Norm) and is created via the harmonization process.

Harmonization Creates a Presumption of Conformity

In the context of the CRA, the word "harmonization" is more than just a synonym for "alignment." It refers to a specific regulatory mechanism used by the European Union to ensure that different laws and technical standards work together without conflict.

The goal for harmonization is to create a presumption of conformity. This means that if you comply with the harmonized version (EN IEC 62443), then you are presumed to conform to the relevant requirements of the CRA – no questions asked.

The Result: If your product meets the harmonized version of IEC 62443 (EN IEC 62443), then it is legally presumed to be in compliance with the CRA. You don't have to prove your security from scratch; you just have to prove you met the standard.

Harmonization also ensures that vertical standards (specific to industrial OT) don't contradict horizontal requirements (general vulnerability reporting or SBOM mandates). The CRA is a horizontal law because it applies to all digital products.

Potential Confusion in the Marketplace

The harmonization process will result in two different versions of the standard being present for a time (IEC 62443 and EN IEC 62443). This can create confusion in the marketplace.

In some countries (like Australia), compliance with IEC 62443 is an accepted means of complying with national law, in this case the Security of Critical Infrastructure (SOCI) Act. A manufacturer that complies with IEC 62443 and Australia’s SOCI Act would not be able to sell their product into the EU based on IEC 62443 conformance alone. The manufacturer may need to go through a second audit process to show compliance with EN IEC 62443.

Harmonization Process

The TC65X WG3 group has been tasked by CENELEC with harmonizing IEC 62443-4-1 (2018) and IEC 62443-4-2 (2019). Its mandate is to document the changes required to align these documents with the CRA. The current approach is to create a “Delta” Requirements document: a list of changes (modifications, deletions, and additions), which is now on its second iteration. Upon completion, the EU national committees will publish merged documents (EN IEC 62443 with the Delta Requirements incorporated).

There are two types of changes proposed by the TC65X team:

- Changes to align IEC 62443 with the CRA

- Changes to improve / enhance IEC 62443-4-1 and -4-2, which were issued in 2018 and 2019 respectively.

The Delta Requirements document is shared for feedback with the JT 62443-4-1 and -4-2 teams (composed of ISA 99 and IEC TC 65 WG10 members), who are responsible for updating the IEC 62443 standard. It provides a preview of the potential changes.

The Delta Requirements documents provide the advance insight that allows companies to be proactive and prepare now instead of waiting for the final EN release.
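One way to picture how a Delta Requirements document relates to the base standard is as a set of additions, modifications, and deletions applied to a list of requirements. The sketch below is purely illustrative: the `Delta` class, the `merge` and `items_to_revisit` helpers, and the `REQ-n` identifiers and texts are all hypothetical placeholders, not taken from IEC 62443 or from any draft.

```python
from dataclasses import dataclass, field


@dataclass
class Delta:
    """Hypothetical model of a Delta Requirements document."""
    additions: dict[str, str] = field(default_factory=dict)      # new id -> text
    modifications: dict[str, str] = field(default_factory=dict)  # existing id -> revised text
    deletions: set[str] = field(default_factory=set)             # ids removed from the base


def merge(base: dict[str, str], delta: Delta) -> dict[str, str]:
    """Return the base requirements with the delta applied (the merged document)."""
    merged = {rid: text for rid, text in base.items() if rid not in delta.deletions}
    merged.update(delta.modifications)
    merged.update(delta.additions)
    return merged


def items_to_revisit(delta: Delta) -> list[str]:
    """Ids a manufacturer already compliant with the base would need to review."""
    return sorted(set(delta.additions) | set(delta.modifications))


# Placeholder ids and text, not taken from any real standard or draft.
base = {"REQ-1": "original text 1", "REQ-2": "original text 2", "REQ-3": "original text 3"}
delta = Delta(
    additions={"REQ-4": "new text 4"},
    modifications={"REQ-2": "revised text 2"},
    deletions={"REQ-3"},
)

merged = merge(base, delta)
print(sorted(merged))
print(items_to_revisit(delta))
```

Seen this way, a manufacturer that already meets the base requirements only needs to focus on the added and modified items, which is why early access to the delta is useful.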

Minimizing Divergence Between IEC 62443 and EN IEC 62443

The long-term goal is to make sure that IEC 62443 and EN IEC 62443 don’t stay different forever. One of the keys to achieving this goal is to understand the impact of the changes before they are final. The two co-leaders of the harmonization team (TC65X WG3) are also members of the ISA-99/IEC 62443 committee. The leaders from these groups meet periodically to discuss the proposed changes and to stay in sync.

The JT 62443-4-1 and -4-2 committees are also working in parallel on updates to IEC 62443. The changes resulting from harmonization are documented as “comments” for review and consideration by the committee. In an ideal world, the JT committees would be able to accept all of the changes introduced by the CRA (all of the Delta Requirements). This would make the future version of IEC 62443 identical to EN IEC 62443.

There will be strong motivation to accept all the harmonization changes. If we don’t, then we will end up with two versions, which no one wants. But IEC 62443 is a consensus standard, meaning that changes require approval by a consensus of the voting members. It’s hard to predict how the entire committee might vote – it is made up of end users, manufacturers, consultants, integrators… all of whom have different interests.

The TC65X harmonization team shares the vision of a common EN IEC document rather than diverging versions. They will review the 2nd Edition of IEC 62443-4-1 and -4-2 and, ideally, accept it as is. If regional requirements are still needed for the CRA, then a reduced-content set of common modifications (COM MOD) will be required.

CRA Timeline

| Date | Swimlane | What it Means |
|---|---|---|
| Dec 10, 2024 | CRA | CRA enters into force (20 days after publication) |
| Q3 2025 | EN Harmonization | First Rev of Delta Requirements issued for review (DC) |
| Q2 2026 | EN Harmonization | Second Rev of Delta Requirements issued for review (CDV) |
| June 11, 2026 | CRA | Certification Infrastructure becomes available – Legal Framework for Notified Bodies |
| Sept 11, 2026 | CRA | Start of Mandatory Vulnerability Reporting Obligations |
| Q4 2026 or Q1 2027 (Expected) | EN Harmonization | EN IEC 62443 (Harmonized standard) is released (FDIS) |
| Q2 2027 (Expected) | IEC 62443 Committee | Draft of IEC 62443-4-1 and -4-2 available for Comment & Vote (CDV) |
| Dec 11, 2027 | CRA | Manufacturers must have updated CE Markings on Products (have completed certification to the EN) |
| Q4 2027 or Q1 2028 (Expected) | IEC 62443 Committee | IEC 62443-4-1 and -4-2 Edition 2 are released. Contains updates based on the EN (harmonized version) |
| Q2/Q3 2028 | EN Harmonization, IEC 62443 Committee | Project Synchronization |

Abbreviations:

CD: Committee Draft

CDV: Committee Draft for Vote

FDIS: Final Draft International Standard
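To make the "less than 12 months" window concrete, here is a minimal Python sketch that estimates the time between the expected release of the harmonized standard and the December 11, 2027 deadline. The timeline only gives quarters for the release, so the end-of-quarter dates below are assumptions chosen for illustration, and `months_between` is a hypothetical helper that uses an average month length.

```python
from datetime import date

# CRA application date from the timeline above.
CRA_DEADLINE = date(2027, 12, 11)

# Assumed release dates for the harmonized standard. The timeline only
# gives quarters, so the end of each quarter is used as a placeholder.
ASSUMED_RELEASES = {
    "end of Q4 2026": date(2026, 12, 31),
    "end of Q1 2027": date(2027, 3, 31),
}


def months_between(start: date, end: date) -> float:
    """Approximate number of months between two dates (average month length)."""
    return (end - start).days / 30.44


for label, release in ASSUMED_RELEASES.items():
    window = months_between(release, CRA_DEADLINE)
    print(f"{label}: about {window:.1f} months before the deadline")
```

Under these assumed dates, both cases come out under 12 months, and a release that slips into Q1 2027 shortens the window further.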

How exida can help make you “CRA-ready”

As the timeline indicates, manufacturers have a limited window to achieve CRA compliance. Our recommendation is for manufacturers to leverage existing 62443 compliance activities plus review / understand / address the Delta Requirements.

exida has several people involved in the harmonization process, which provides a unique advantage in navigating these "moving target" requirements. As the co-chair for JT 62443-4-1, I regularly meet with the harmonization team leaders and review / discuss the Delta Requirements. This puts exida in a great position to advise clients on the potential impact of harmonization so that they can be ahead of the curve when the official EN is released.

We are the longest running ICS cyber security certification organization (since 2011) and have performed many IEC 62443 certifications to date.

exida is also a Notified Body (NOBO), chartered with assessing whether products meet strict European safety requirements before entering the market. By achieving NOBO status for the Cyber Resilience Act (CRA), we will gain first-hand insight into the exact evaluation criteria and expected documentation standards for CRA conformity.

This unique "assessor's perspective" allows us to guide you through readiness activities that minimize the risk that your conformity assessment will be delayed or that you won’t pass. We know what it takes to pass a conformity assessment, whether you are preparing for self-assessment or third-party assessment.

References / Other Information on CRA

Blog: How IEC 62443 Can Help Achieve Compliance with the EU Cyber Resilience Act (CRA)
