In this blog, I will talk about the FMEDA method and how it can generate realistically accurate failure rate data.

The first question we have to ask is “why do you need failure rate data?”

One of the fundamental concepts in today’s functional safety standard, IEC 61508, and its derivative works is probabilistic analysis of any given safety function design. You can do probabilistic analysis only when you have failure rate data for all the products that are installed or might be installed.

Getting Failure Rate Data

Where does one get failure rate data? For that we have industry databases.

Today, the OREDA (Offshore Reliability Equipment Database Association) provides excellent data. Company groups get together and voice their opinions about what numbers to use. Manufacturers can do field return data studies, which provide useful information but have limitations in terms of absolute failure rate.

There’s a technique called B10 data from ISO 13849, the machine safety standard, but it has some severely limiting assumptions.

The FMEDA technique was developed by engineers at exida during the 1990s. There are also end-user field failure data studies, which have the potential to offer a great deal of good data if a good quality data collection system is in place. So, I strongly recommend such things.

Today, a lot of very useful data is provided by the OREDA database, a consortium of offshore companies in the North Sea. The consortium is operated by the DNV out of Norway. All the data analysis that I have seen has been done by SINTEF in Norway.

It provides a lot of very useful information on process equipment. They published a PDF data handbook which has failure rates, failure modes, and common cause factor estimates for use in safety instrumented function verifications. It’s kept relatively up-to-date with the most recent public release in 2010. What I learned, very importantly, is that all realistic failures are included. That includes both failures due to product design and manufacturing, and failures due to site operational procedures, including things like maintenance errors and exceptional stress.

What was very clear to me, after traveling to Norway and attending a safety seminar where the subject of OREDA vs. FMEDA was debated, is that OREDA felt strongly that all real failures must be included in PFD average calculations in order to show realistic results.

They mean both product related failures like manufacturing defects, design weaknesses, unexpected stress events that weren’t anticipated by the designers, failures of random support equipment and site specific failures like maintenance errors, testing and calibration errors, and exceptional unexpected high stress events.

In the discussion I was involved in while in Norway, a number of people said all of these failures are included, and that they should be.

Getting Failure Data

What about company and group failure rate data estimates?

Typically a group of experienced engineers share their memories of failure events and estimate a failure rate. It’s not a really scientific approach. Results definitely vary depending on who’s in the room and their specific experience, but the results can be valuable, especially for comparison purposes.

For example, a committee in Germany from the NAMUR chemical industry group published NE-130, which lists failure rates for sensors, logic solvers, and final elements.

They published a dangerous undetected failure rate for a final element assembly of 400 FIT. Interestingly, I don’t have any idea how they arrived at this number, but it’s a piece of data we can use to compare and gain information from.
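To compare a FIT figure with rates quoted in failures per hour, a unit conversion helps. The sketch below relies only on the standard definition of FIT (one failure per 10^9 device-hours); the function names are illustrative, not from any tool.

```python
# FIT = failures per 10^9 device-hours (standard definition of the unit).
FIT_PER_HOUR = 1e-9

def fit_to_per_hour(fit: float) -> float:
    """Convert a failure rate in FIT to failures per hour."""
    return fit * FIT_PER_HOUR

def per_hour_to_fit(rate_per_hour: float) -> float:
    """Convert a failure rate in failures per hour to FIT."""
    return rate_per_hour / FIT_PER_HOUR

# The NE-130 final element figure quoted above.
namur_final_element = fit_to_per_hour(400)
print(f"{namur_final_element:.2e} failures/hour")
```

So 400 FIT is 4.0E-7 failures per hour.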

What about manufacturers’ field return data?

The biggest problem with manufacturers’ field data is that manufacturers cannot be certain what percentage of actual failures are returned. Manufacturers also cannot know for certain how many field operating hours exist. They know shipping dates, but they don’t know how long it takes to install an instrument. They don’t know if it’s been sitting on a stock room shelf or not. There are a number of variables here. I’ve seen some very optimistic calculations done by people in manufacturing companies. I will admit that I was taught to do those optimistic calculations when I worked for a manufacturer. Even though I’m skeptical about using this data as an absolute failure rate, I find that it can be very valuable. There’s a lot of really useful information in this data. We should definitely include it in the input to any failure analysis system.
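A minimal sketch of why these unknowns matter, assuming a simple failures-divided-by-hours estimate. All numbers and the two correction parameters (`return_fraction`, `in_service_fraction`) are hypothetical, chosen only to show the direction of the bias.

```python
def field_failure_rate(returned_failures, units_shipped, hours_since_ship,
                       return_fraction=1.0, in_service_fraction=1.0):
    """Estimate failures per hour from field returns.

    return_fraction: assumed share of actual failures that were returned.
    in_service_fraction: assumed share of hours since shipment actually
        spent operating (not in transit or on a stock room shelf).
    """
    actual_failures = returned_failures / return_fraction
    operating_hours = units_shipped * hours_since_ship * in_service_fraction
    return actual_failures / operating_hours

# Hypothetical product: 5000 units shipped three years ago, 10 returns.
hours = 3 * 8760
optimistic = field_failure_rate(10, 5000, hours)
skeptical = field_failure_rate(10, 5000, hours,
                               return_fraction=0.25, in_service_fraction=0.8)

print(f"optimistic: {optimistic:.2e}/h")
print(f"skeptical:  {skeptical:.2e}/h")
print(f"ratio:      {skeptical / optimistic:.1f}x")
```

With these assumed fractions the "every failure is returned, every unit runs from the ship date" calculation is five times lower than the corrected one.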

B10d (Cycle Test) Failure Data

I don’t know if you’ve even heard of this before. It comes from machine safety not process safety.

The B10 method uses cycle testing. The concept is that a cycle test is done on a set of products. It’s typically most useful for mechanical and electromechanical products. You cycle them on and off as fast as you can.

Given a set of products, typically more than 20, you run the test until 10% of them fail. If the test set was 20, you run until 2 of them fail. The number of cycles at that point is called the B10 point: the number of cycles at which 10% have failed. You can convert the number of cycles to a time period by knowing the cycles per hour in any particular application. You then calculate a failure rate by dividing the failed fraction (10%) by that time period; for a set of 20, that is two failures divided by 20 units times the time period.

The dangerous failure rate is obtained by assuming a 50-50 split between safe and dangerous failures. The problem with this method is that it assumes the entire constant failure rate during the useful life is due to premature wear-out, typically caused by manufacturing defects or product weaknesses. In the end, cycle testing was designed to predict the useful life of a mechanical product. So, it’s primarily used to predict useful life or the wear-out point.

It is interesting that someone thought that the method could be used to predict a constant failure rate, but the primary assumption is all other failure modes are completely insignificant compared to premature wear out. That’s interesting but not at all true for any mechanical product.

Consider, for example, static applications where the product does not move. Some failure modes become very significant when a product sits motionless for 100 hours. That’s less than a week! If it sits there for four days, stiction begins to form. That can cause dangerous failures. B10 data would not apply. So generally speaking, while it can be used in high-speed machine safety applications, it’s totally inappropriate for process industries.
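The B10 arithmetic described above can be sketched as follows. This is a minimal illustration, assuming the failure rate is the 10% failed fraction divided by the time to reach the B10 point and that half of the failures are dangerous; the B10 value and cycle rates are hypothetical.

```python
HOURS_PER_YEAR = 8760

def b10_failure_rates(b10_cycles, cycles_per_hour, dangerous_fraction=0.5):
    """Return (total, dangerous) failures per hour implied by a B10 point.

    Assumes, as the B10 method does, that a constant failure rate can be
    read off the time at which 10% of the units have failed.
    """
    hours_to_b10 = b10_cycles / cycles_per_hour  # time for 10% to fail
    total = 0.10 / hours_to_b10                  # failed fraction per hour
    return total, total * dangerous_fraction

# Hypothetical B10 point of one million cycles.
machine = b10_failure_rates(1_000_000, cycles_per_hour=10)
process = b10_failure_rates(1_000_000, cycles_per_hour=1 / HOURS_PER_YEAR)

print(f"10 cycles/hour: total {machine[0]:.2e}/h, dangerous {machine[1]:.2e}/h")
print(f"1 cycle/year:   total {process[0]:.2e}/h, dangerous {process[1]:.2e}/h")
```

For a device that strokes once a year, the same formula returns a rate on the order of 1E-11 per hour, because it only counts wear from cycling. Failure modes that develop while the device sits still, such as stiction, never enter the calculation.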

FMEDA

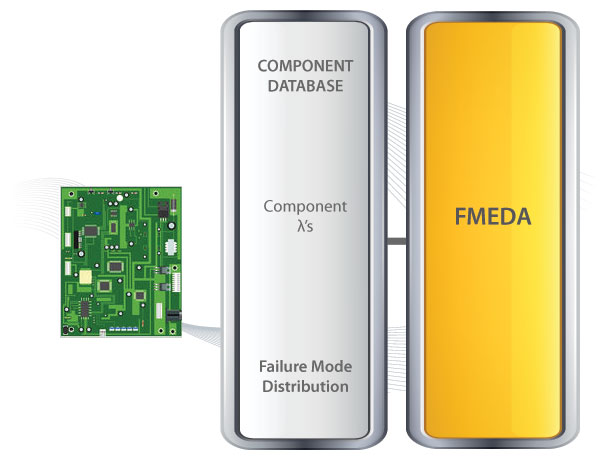

FMEDA is a predictive failure concept that can be used on brand-new designs, based on the design strength. It was developed because enough failure statistics at the product level were not available. Neither manufacturers, nor end-users, nor engineering companies do a really good job of gathering failure statistics. And if they do, the statistics aren’t available until the product is obsolete. Think about the usefulness of that. Therefore, given that we don’t have enough failure statistics at the product level, where do we have failure statistics? Well, at the component level. We break a design into its components and perform a detailed study of each component.

It’s truly a study of design strength. It’s a tedious process. Some people consider it a very boring process. Crazy people like myself enjoy this kind of work. You review every single part and ask what happens if that part fails in a given mode. Say this capacitor fails open circuit.

How does that impact the design? Does the device continue to operate? Does the device fail? If it does fail, does it fail safe? Or does it fail dangerous? If it does fail in one of those modes, can the diagnostics detect that failure?

Using a component database that has failure rates, failure modes, and useful life, the FMEDA process generates a product failure rate as well as product failure modes, diagnostic coverages, and even useful life. Using a good component database, these numbers can be determined and predicted more accurately than with only manufacturers’ field data on previous products.
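The roll-up from component failure modes to product-level numbers can be sketched like this. It is a simplified illustration, not the exida tool or database: the component entries and FIT values are made up, and the diagnostic coverage and safe failure fraction formulas are the usual IEC 61508 definitions with no-effect failures left out of the fraction.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class FailureMode:
    component: str
    mode: str
    rate_fit: float  # component failure rate times mode share, in FIT
    effect: str      # "safe", "dangerous", or "no_effect"
    detected: bool   # can the diagnostics detect this failure?

def fmeda_rollup(modes):
    """Sum component failure modes into SD/SU/DD/DU totals, DC, and SFF."""
    totals = defaultdict(float)
    for m in modes:
        if m.effect == "no_effect":
            totals["no_effect"] += m.rate_fit
        else:
            key = ("S" if m.effect == "safe" else "D") + ("D" if m.detected else "U")
            totals[key] += m.rate_fit
    dangerous = totals["DD"] + totals["DU"]
    counted = totals["SD"] + totals["SU"] + dangerous
    dc = totals["DD"] / dangerous if dangerous else 0.0
    sff = (counted - totals["DU"]) / counted if counted else 0.0
    return dict(totals), dc, sff

# Hypothetical worksheet rows; every value here is invented for illustration.
worksheet = [
    FailureMode("C1 capacitor", "open circuit", 2.0, "dangerous", True),
    FailureMode("C1 capacitor", "short circuit", 3.0, "safe", False),
    FailureMode("R1 resistor", "drift", 1.0, "no_effect", False),
    FailureMode("U1 output driver", "stuck output", 4.0, "dangerous", False),
]

totals, dc, sff = fmeda_rollup(worksheet)
print(totals)
print(f"diagnostic coverage = {dc:.1%}, safe failure fraction = {sff:.1%}")
```

A real FMEDA has hundreds of rows per product, but the bookkeeping is the same: each row answers the questions above (what is the effect, and is it detected), and the totals fall out of the sum.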

The FMEDA process can generate very detailed results that can clearly distinguish between different designs of mechanical, electronic, and electromechanical devices.

FMEDA - Biggest Negative

You can imagine the biggest negative of an FMEDA: it depends on a component database.

I’ve heard the term “garbage in - garbage out” for decades and, of course, the accuracy of the FMEDA itself depends on the accuracy of the component database. Therefore, it’s very important that any component database be verified. It’s what we might call “calibrated”.

At exida, we have gathered over 100 billion unit operating hours of field data, primarily from the process industry. We compare these field failure data results to the FMEDA results on a product level. We do it over and over and over again, hundreds of times. Every time there’s some fundamental difference between the field failure data, even manufacturers’ field failure data, and the FMEDA results, we have to explain the differences. Sometimes we discover components are being used differently than we anticipated and we add a new component to our component failure database.

Sometimes we discover that the field failure data collection system has some fundamental flaw. We re-analyze the data, excluding or including additional information. The key is, it’s a closed loop feedback system which over time has developed a relatively accurate methodology for predicting future failure rates.
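One step of that feedback loop, the comparison itself, might look like the sketch below. The factor-of-two tolerance, the function name, and the input numbers are all assumptions for illustration; the text does not say what threshold exida uses to decide a difference is fundamental.

```python
def compare_to_field(fmeda_rate, field_failures, field_unit_hours, tolerance=2.0):
    """Compare an FMEDA prediction with a field failure rate estimate.

    Returns (field_rate, ratio, needs_review). needs_review is True when
    the field rate differs from the prediction by more than `tolerance`
    in either direction (a hypothetical threshold).
    """
    field_rate = field_failures / field_unit_hours
    ratio = field_rate / fmeda_rate
    needs_review = ratio > tolerance or ratio < 1 / tolerance
    return field_rate, ratio, needs_review

# Hypothetical product: FMEDA predicts 5.0E-7/h; the field study shows
# 120 failures in 1.0E8 unit operating hours.
field_rate, ratio, needs_review = compare_to_field(5.0e-7, 120, 1.0e8)
print(f"field rate {field_rate:.2e}/h, ratio {ratio:.1f}, review: {needs_review}")
```

A flagged result does not say which side is wrong. As described above, the explanation may be a component used in an unanticipated way or a flaw in the field data collection.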

Public Component Database

It is important that this component database be made public, and exida sells it, making it available to anyone.

One primary reason is so you can criticize us. People have purchased our database, called us up, and said “You know this component and this failure rate? I think it’s wrong!” We will talk to them. We will reconcile the differences and improve the database.

Comparing FMEDAs

FMEDAs, of course, depend on this component database, and not all FMEA / FMEDA results are the same. Compare valve failure rates. We have some exida numbers: 4.83E-7 and 1.35E-6. The difference is the application. Is it a full stroke application where you can tolerate leakage? Or is it an application with tight shutoff?

I think most everyone would understand that the tight shutoff application has a higher failure rate, because many of the potential degradation mechanisms of the seal count as failures in the tight shutoff application. I compare this to another set of failure rate numbers I saw published on the certificate of a well-known German certification body. They had a number of 6.55E-8. My goodness! That could be two orders of magnitude or at least an order of magnitude too low. The problem with that, of course, is that it’s dangerous!

What about actuators?

We have a couple of exida numbers: a spring return rack and pinion actuator and a double acting rack and pinion actuator. You see the 4.29E-07 and the 6.84E-07. That’s failures / hour. Another set of data, from an FMEA based on manufacturers’ warranty data, showed 8.54E-09. That’s approaching two orders of magnitude too low. That’s not good. If someone uses a particularly optimistic failure rate, they could be designing a system that is not safe enough.
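The gaps quoted above are easy to check. This sketch takes the failure rates from the text and computes how far each comparison number falls below the exida number, as a ratio and in orders of magnitude. Pairing each exida value with an application or actuator type by the order in which they are listed is an assumption.

```python
import math

def shortfall(reference, candidate):
    """Return (ratio, orders of magnitude) by which candidate is below reference."""
    ratio = reference / candidate
    return ratio, math.log10(ratio)

# (label, exida rate, comparison rate), all in failures per hour.
comparisons = [
    ("valve, full stroke", 4.83e-7, 6.55e-8),
    ("valve, tight shutoff", 1.35e-6, 6.55e-8),
    ("actuator, spring return", 4.29e-7, 8.54e-9),
    ("actuator, double acting", 6.84e-7, 8.54e-9),
]

results = {}
for label, exida_rate, other_rate in comparisons:
    results[label] = shortfall(exida_rate, other_rate)
    ratio, orders = results[label]
    print(f"{label:26s} {ratio:6.1f}x lower ({orders:.2f} orders of magnitude)")
```

The valve number comes out roughly 7x to 21x lower than the exida values, and the actuator number roughly 50x to 80x lower.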

How do you know these numbers are no good? There’s only one way: compare the numbers with actual field failure returns, NOT manufacturers’ warranty data.

Comparing FMEDA vs. OREDA

The first thing we did at exida was compare the FMEDA results to OREDA. We did this primarily by attending a conference in Norway where this was the subject of debate. There were many engineers from the OREDA analysis team, an engineer from exida, and a number of end-users in the audience. It was very clear to me from listening to the debate that OREDA included more than just product failures.

OREDA included more than exida uses in an FMEDA. When we started studying the numbers, we discovered that they answered a question we had from years ago, when some of our engineers did a field failure study where we looked at the failure rate from site to site. We discovered the ratio was about 4X. Why would that be? A 4X failure rate difference for the same piece of equipment from site to site?

A service engineer went to that site and discovered some obvious systematic issues. The issues were removed from the analysis. Then the failure rate still varied by about 2X from site to site. Quite frankly, this was the same industry, the same company, operating under allegedly the same procedures. The environmental variables were almost identical at these two sites, yet the failure rates differed by 2X. During the OREDA conference, it was very obvious to me that some of the failures OREDA was using, something like half of them, were site specific.

Failures: Product vs. Site

What we are talking about is the difference between these product failures and the site specific failures like maintenance errors, testing and calibration errors, unexpected stress events, etc. The exida view is, of course, that all real failures must be included in a realistic PFD calculation, but we model them differently.

Site Failures

We use the term “operational safety culture”. We have a method created to evaluate the operational safety culture of a company. Then we use that model in our exSILentia tool to adjust the failure rates as well as probabilities of successful repair, probabilities of successful proof test, and a number of other important variables that are impacted by site specific policy.

This more accurate way of modeling operational safety culture gives credit to the people who are doing a good job. It also properly penalizes those who are not, rather than taking the average of all of them, which is what you get when you come up with a single failure rate.

End User Field Failure Studies

End-user field failure data studies represent a rich opportunity. Wow! If we could get sufficient data recorded on a specific site and we could analyze it, we could come up with some REALLY good failure rate information. We could also identify even more variables that might be addressed.

The problem is insufficient information, but I would never turn down an opportunity to look at a field failure study. There’s always very useful information in there. exida is quite blessed to be able to have studied several dozen field failure studies from the chemical, petrochemical, offshore industries, and one nuclear (which was really full of rich, valuable data). We use that as input to our system to help make certain that our FMEDA results are reasonably accurate.

Much of the information we do get is insufficient. It often leaves questions open, like:

- When is a failure report written?

- What’s the definition of a failure?

We had one site, for example, where I was able to go with the maintenance technician when proof testing was done. The person would check the calibration of a sensor. If they were able to recalibrate it, they would not mark it as a failure, even though the initial calibration might have been far enough out to cause a dangerous failure of the safety function. Those should have been marked as failures, but they were not. Their particular data analysis came out much more optimistic than exida’s because the as-found conditions were not properly recorded. Many times the operating conditions are not recorded, but things are getting better.

Field Data Collection Standards

There are field data collection standards available today from ISO to IEC to NAMUR. Even though it’s not a standards body, the AIChE Center for Chemical Process Safety has formed a Process Equipment Reliability Database (PERD) committee. They have developed methods for collecting data, and these all offer help and promise for the future.

Field Data Collection Tools

The other big thing that I see happening right now is the number of field data collection tools on the market. At exida, our tool is called SILStat. It’s preloaded with failure taxonomies, a simplified picklist, and exida offers a data analysis service using our predictive analytics technique. The data output reports are even compatible with PERD.

What all this means to me is very simple. We have data. I’d like it to be better. It’s going to be better.

Comparing Failure Rates

Check out the video below for an in-depth explanation on comparing failure rates.

These kinds of comparisons are what I’m talking about when I say compare the failure rates from field data to FMEDA results. At exida, we do this frequently. We use all the data we can get, including manufacturers’ warranty studies and end-user field failure studies, and we look for relative accuracy.

We do our best to gather as much information as we can. This past year we have visited BASF, Dow, DuPont, Exxon Mobil upstream, Exxon Mobil downstream, and Air Products. We still have a number of other companies we’re going to visit looking for more failure rate data. We’re going to do more comparisons. If we find problems, we will take action and explain the differences. That’s how you get the FMEDA component database calibrated to produce realistic, accurate data.

I conclude that in the process industries OREDA is a valuable asset to all of us. Those companies that participate in this data aggregation effort should be congratulated.

Manufacturer warranty data is a source of valuable information, but not that useful for absolute failure rates.

The B10 cycle test approach is totally inappropriate for any process industry applications. Unfortunately, there are a lot of people still using it out there. I doubt those people would pass the CFSE exam.

The exida FMEDA database is predictive and provides relatively accurate failure rate data about a product, but the FMEDA technique does not produce reliable failure rate data for site specific failures. At exida, we use an operational safety culture approach for that.

We’re hoping for more good-quality site data, and I’m convinced we’re going to get some as the tools become more widespread.

So, in summary, I think FMEDA results calibrated against field failure rate data provide accurate failure rate data for process industries. FMEDA results can provide strong predictions for brand new products long before field failure results are available.

FMEA / FMEDA results based on manufacturers’ warranty data are optimistic. They should never be used to verify a design under realistic operating conditions. The operational safety culture should absolutely be included in random failure probability analysis. Such failure rates are not part of product failure rate numbers.

If you’re not using the exSILentia tool, I do recommend that you take the FMEDA results and multiply by two, since several field failure studies have indicated that’s appropriate.
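To see what that factor of two can do to a verification result, here is a minimal sketch using the common simplified formula for a single (1oo1) element, PFDavg ≈ λDU × TI / 2, and the low-demand SIL bands from IEC 61508. Treating the 1.35E-6 valve figure quoted earlier as a dangerous undetected rate and using a one-year proof test interval are both assumptions for illustration. The band check looks at PFDavg only and ignores architectural constraints.

```python
HOURS_PER_YEAR = 8760

def pfd_avg_1oo1(lambda_du, proof_test_interval_h):
    """Simplified PFDavg for a single (1oo1) element: lambda_DU * TI / 2."""
    return lambda_du * proof_test_interval_h / 2

def sil_band(pfd_avg):
    """Low-demand SIL band by PFDavg alone, or None if outside SIL 1 to 4."""
    bands = {4: (1e-5, 1e-4), 3: (1e-4, 1e-3), 2: (1e-3, 1e-2), 1: (1e-2, 1e-1)}
    for sil, (low, high) in bands.items():
        if low <= pfd_avg < high:
            return sil
    return None

lambda_du = 1.35e-6  # assumed dangerous undetected rate, failures per hour
for factor in (1, 2):
    pfd = pfd_avg_1oo1(factor * lambda_du, HOURS_PER_YEAR)
    print(f"x{factor}: PFDavg = {pfd:.2e}, SIL band = {sil_band(pfd)}")
```

Under these assumptions the unadjusted rate lands in the SIL 2 band and the doubled rate lands in the SIL 1 band, which is why the choice of failure rate data matters.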
